Simulating Decision-Making with the Linear Ballistic Accumulator

I am proud to share a fantastic undergraduate research project completed by my student, Haley Daniel. In this work, we explored the mathematical modeling of human decision-making and response times, specifically focusing on the Linear Ballistic Accumulator (LBA) model introduced by Brown and Heathcote in 2008.

One of the most rewarding aspects of this project was the hands-on computational and theoretical work Haley undertook. We set out not only to derive the underlying probability distributions independently but also to build the model from scratch in Python rather than rely on the original R implementations.

The Mechanics of the LBA Model

When forced to make a choice between two options, how do we decide, and how long does it take?

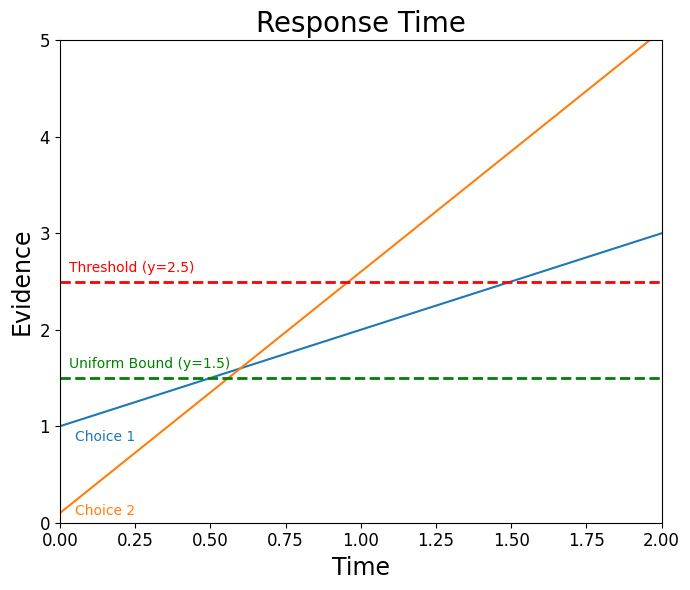

The LBA model represents this process as a “race” to a threshold. For a binary choice, there are two independent evidence accumulators, one per option. Each accumulator starts with a random initial amount of evidence drawn from a uniform distribution, $X \sim \mathcal{U}(0,A)$.

From that starting point, evidence builds at a constant speed called the drift rate, which is drawn from a normal distribution with mean $v$ and standard deviation $s$, $M \sim \mathcal{N}(v,s)$. The first accumulator to reach the threshold $b$ determines both the choice that is made and the response time (RT).

What makes the LBA model so elegant is its simplicity. Unlike earlier diffusion models that incorporate moment-to-moment randomness, the LBA limits randomness strictly to the starting conditions, i.e. initial evidence and drift rate. Once those are set, the accumulation is entirely deterministic.
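The race described above can be sketched in a few lines of standard-library Python. This is a minimal illustration, not the project's actual code: the function name `simulate_lba_trial`, the parameter values, and the choice to redraw a trial when no accumulator has a positive drift rate are all assumptions made here.

```python
import random

def simulate_lba_trial(rng, v, b, A, s):
    """Simulate one LBA race and return (winner_index, decision_time).

    v: mean drift rate of each accumulator; b: threshold;
    A: upper bound of the uniform start point; s: drift-rate standard deviation.
    """
    while True:
        finish_times = []
        for v_i in v:
            start = rng.uniform(0.0, A)  # X ~ U(0, A)
            drift = rng.gauss(v_i, s)    # M ~ N(v_i, s)
            # An accumulator with a non-positive drift never reaches the threshold.
            finish_times.append((b - start) / drift if drift > 0 else float("inf"))
        fastest = min(finish_times)
        if fastest < float("inf"):
            return finish_times.index(fastest), fastest
        # Assumption: if no accumulator can finish, redraw the whole trial.

# Illustrative (not fitted) parameter values.
rng = random.Random(2008)
trials = [simulate_lba_trial(rng, v=(1.0, 0.6), b=1.0, A=0.5, s=0.3)
          for _ in range(30_000)]
share_first = sum(1 for choice, _ in trials if choice == 0) / len(trials)
mean_rt = sum(rt for _, rt in trials) / len(trials)
print(f"accumulator 0 wins {share_first:.1%} of trials; mean decision time {mean_rt:.3f}")
```

All of the randomness sits in the two draws at the top of the loop; once `start` and `drift` are fixed, the finishing time is a single division.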

Deriving the Response Time Distributions

To fit this model to real data, we need the Probability Density Function (PDF) and Cumulative Distribution Function (CDF) of the response times. Because the accumulation is linear, the time taken for an accumulator to reach the threshold is simply the remaining distance divided by the drift rate.
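As a sketch of that step, assume $b \ge A$ (so no accumulator starts above the threshold) and write $T_i$ for the finishing time of accumulator $i$, a symbol introduced here only for illustration:

\[T_i = \frac{b - X_i}{M_i}, \qquad F_i(t) = P(T_i \le t) = P(X_i + tM_i \ge b), \quad t > 0.\]

An accumulator with a non-positive drift rate never reaches the threshold, so $F_i(t)$ approaches $\Phi(v/s)$ rather than 1 as $t \to \infty$. Differentiating $F_i(t)$ with respect to $t$ gives the density $f_i(t)$.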

We derived these distributions independently. For example, the PDF for the finishing time of a single accumulator $i$ is given by:

\[f_i(t) = \frac{1}{A}\left[ -v\Phi\!\left(\frac{b - A - v t}{st}\right) + s\phi\!\left(\frac{b - A - v t}{st}\right) + v\Phi\!\left(\frac{b - v t}{st}\right) - s\phi\!\left(\frac{b - v t}{st}\right) \right]\]

Here $\Phi$ and $\phi$ are the standard normal CDF and PDF, and $v$ is the mean drift rate of accumulator $i$.

Using these functions, we can construct the “defective” PDF: the density of accumulator $i$ reaching the threshold at time $t$ while every competitor is still below it, $f_i(t)\prod_{j \neq i}\left(1 - F_j(t)\right)$. This allows us to use Maximum Likelihood Estimation (via differential evolution) to fit the model parameters to experimental data.
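For readers who want to experiment, here is a minimal standard-library sketch of these quantities, writing the mean drift rate as `v`. The CDF is the standard closed form from Brown and Heathcote (2008). The function names, the floor inside the logarithm, and the layout of `data` as `(choice, rt)` pairs are assumptions made for illustration and are not taken from the project's code. Non-decision time is omitted.

```python
import math

def _phi(z):
    """Standard normal PDF."""
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)

def _Phi(z):
    """Standard normal CDF."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def lba_pdf(t, v, b, A, s):
    """Density of one accumulator's finishing time."""
    if t <= 0:
        return 0.0
    z1 = (b - A - v * t) / (s * t)
    z2 = (b - v * t) / (s * t)
    return (-v * _Phi(z1) + s * _phi(z1) + v * _Phi(z2) - s * _phi(z2)) / A

def lba_cdf(t, v, b, A, s):
    """Probability that one accumulator has finished by time t."""
    if t <= 0:
        return 0.0
    z1 = (b - A - v * t) / (s * t)
    z2 = (b - v * t) / (s * t)
    return 1.0 + ((b - A - v * t) * _Phi(z1) - (b - v * t) * _Phi(z2)
                  + s * t * (_phi(z1) - _phi(z2))) / A

def defective_pdf(t, i, v, b, A, s):
    """Density of accumulator i finishing at t before all the others."""
    dens = lba_pdf(t, v[i], b, A, s)
    for j, v_j in enumerate(v):
        if j != i:
            dens *= 1.0 - lba_cdf(t, v_j, b, A, s)
    return dens

def neg_log_likelihood(data, v, b, A, s):
    """Negative log-likelihood of (choice, rt) pairs."""
    total = 0.0
    for choice, rt in data:
        # Assumption: floor the density so log() never receives zero.
        total -= math.log(max(defective_pdf(rt, choice, v, b, A, s), 1e-300))
    return total
```

A function like `neg_log_likelihood` is the kind of objective one could hand to a global optimizer such as `scipy.optimize.differential_evolution`, with bounds chosen for each parameter.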

Testing the Python Implementation

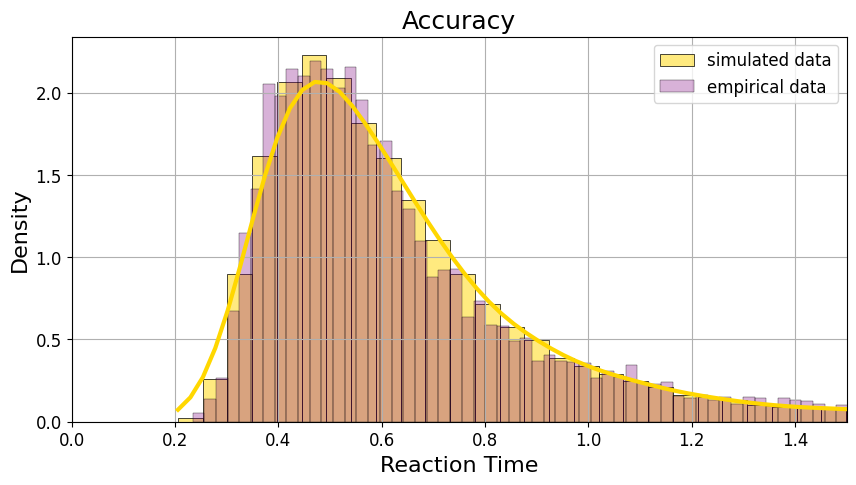

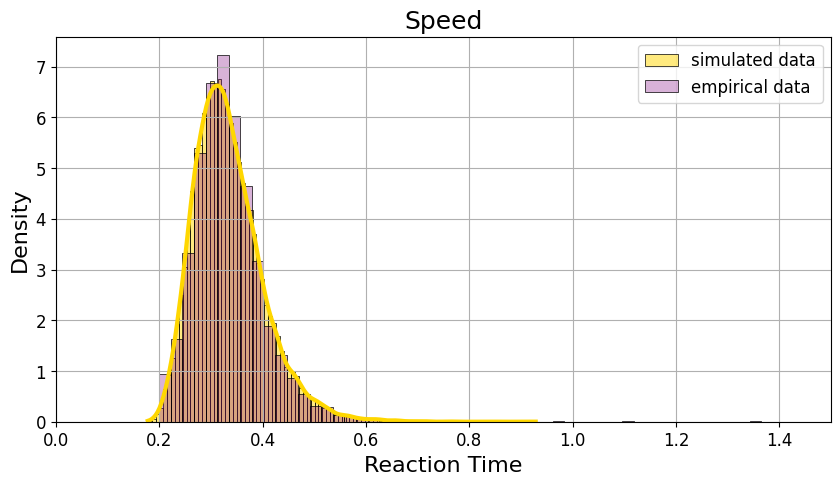

To validate our Python implementation, we applied the model to classic empirical data from a brightness discrimination task (Ratcliff and Rouder, 1998). In this experiment, participants classified pixels as “light” or “dark” under two different sets of instructions: one emphasizing accuracy, and the other emphasizing speed.

The results were excellent. We simulated 30,000 trials using our optimized parameters, and the simulated distributions closely tracked the empirical data.

When participants were told to focus on speed, the response time distribution shifted markedly to the left and narrowed. Once again, the LBA model accurately captured this shift.

It is incredibly rewarding to see theoretical probability and computational programming come together to predict human behavior so accurately!
